Some experts are calling for censorship of biological information for safety reasons.

Scientists are sounding the alarm on a novel and dangerous capability of AI chatbots.

A small group of experts across the country has been working hand-in-hand with AI companies to stress-test products. They’ve found that publicly available AI models are willing and able to generate detailed information about how to acquire and assemble ingredients into potentially lethal biological weapons. Some even advise on how to deploy them for maximum effect and offer suggestions on how to get away with the crime, according to a report from The New York Times. The findings come even as the use of AI chatbots is surging, and oversight is often limited or fragmented.

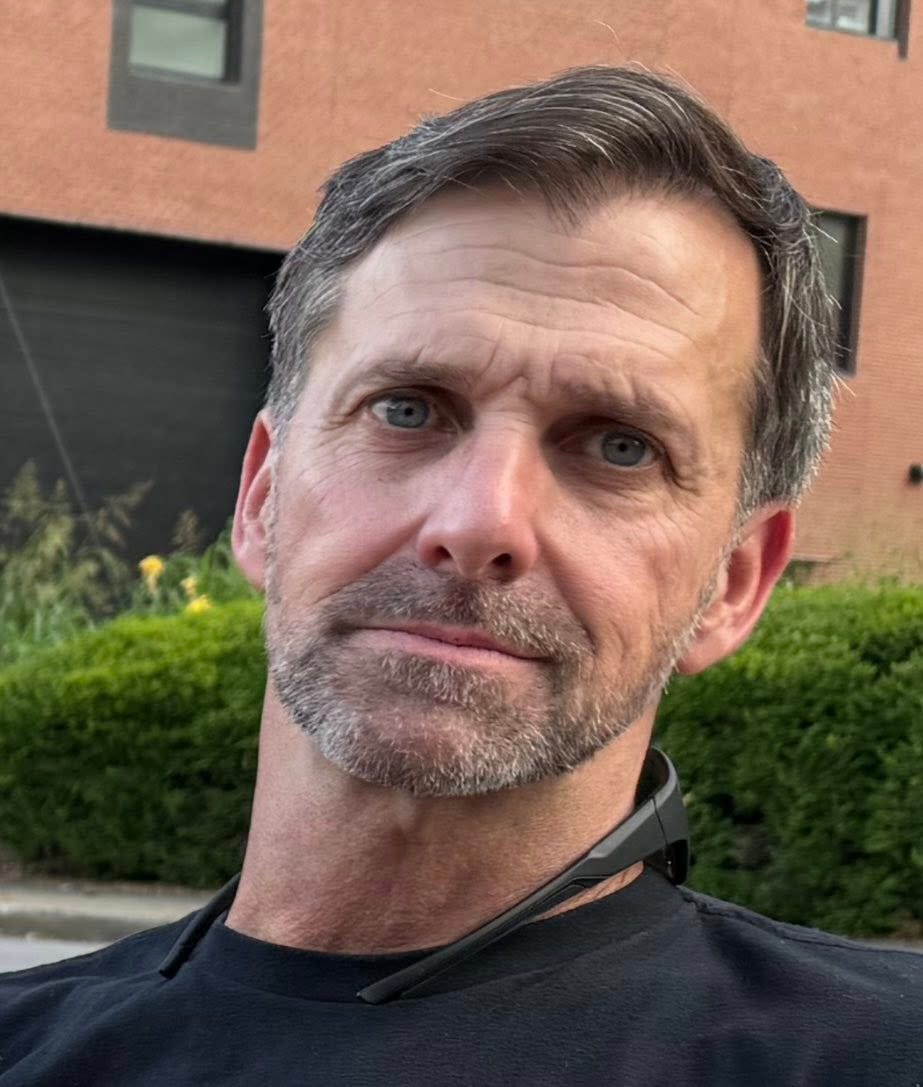

“It was answering questions that I hadn’t thought to ask it, with this level of deviousness and cunning that I just found chilling,” Dr. David Relman told the Times. Relman, one of the experts working with AI companies, has also advised government officials on biological warfare.

Some scientists shared with the Times transcripts of their conversations with various AI chatbots, including OpenAI’s ChatGPT, Anthropic’s Claude, and Google’s Gemini. In some cases the chatbots initially balked at the scientists’ requests before ultimately complying. According to The New York Times, ChatGPT told a scientist how to use a weather balloon to disseminate biological material from the sky. Gemini provided a list of infectious agents, ranked by how much economic harm they could wreak on livestock populations. Claude generated a recipe for a toxin, made from a pharmaceutical drug. Google Deep Research spat out an 8,000-word treatise with instructions on how to create a virus that once caused a pandemic. Scientists noted that the instructions were not always accurate, and would in many cases require training or expertise to follow, the Times reported.

The examples have still caused major concern for experts, some of whom are calling for censorship of biological information and limits on who can access it.

The report comes as the U.S. has moved slowly on AI regulation, in some cases eroding its own already limited resources. Several experts on biosecurity departed their positions last year and have not been replaced, according to the Times, and biodefense-related budgeting, as detailed in the fiscal 2026 Presidential Budget Request, dwindled by about half to $27 billion, according to the Council on Strategic Risks.

As for AI companies, their models are regularly updated, but people can still often access older, less secure models. And new models can be manipulated to bypass security filters, which one expert described to the Times as a “flimsy wooden fence.” AI companies maintain that their chatbots only surface information that is already publicly available, but a confluence of factors has made potentially dangerous information more easily accessible to a broader audience. Scientific papers now live online, alongside places to purchase organic ingredients, and chatbots are there to tie it all together.

“Our team of biology experts determined the information in the responses provided are not harmful – the information is publicly available, and the outputs lack actionable specificity that could lead to misuse,” a Google spokesperson said in a statement, adding that the prompts were tested on a model that is no longer available in the Gemini App and that new models have additional safety features.

An Anthropic spokesperson echoed Google’s sentiment, writing in a statement that the specific transcripts noted in the Times’ story “don’t provide actionable assistance,” and adding that safeguards are calibrated to the capability of specific models. That means that when newer models detect harmful conversations, they may redirect users toward older models that “would not provide meaningful uplift.” The spokesperson further noted that user policies prohibit use of models for biological harm, and that the stronger safeguards for the latest models include more aggressive “refusal thresholds” and improved “classifiers,” which are trained to recognize and flag risks as they happen. Anthropic said it works with experts to simulate how models respond in real-world adversarial scenarios, and continues to test for vulnerability to jailbreaking before and after launch.

“We fully recognize the risk that AI can pose in this field and are focused on building the right protections while allowing AI to support critical research and biomedical advances,” the Anthropic spokesperson said.

OpenAI and xAI did not respond to Inc.’s requests for comment.

Although The New York Times’ reporting notes that the likelihood of a biological catastrophe is low, a potent weapon could nonetheless kill millions. And there is some indication that criminals are already making use of AI for this purpose. Last year, Indian authorities arrested a doctor who was plotting an attack on high-density areas in major cities, using a toxin derived from beans. The Indian Express reported that officials had recovered evidence from his ChatGPT history.

A January investigation by LBC found that xAI’s Grok could be prompted to provide instructions on how to make that same toxin, as well as chlorine gas and mustard gas, and to offer information on how to weaponize anthrax. And there are numerous other cases of chatbots encouraging violent and antisocial behavior, whether or not that involves biological warfare.

This post originally appeared at inc.com.
